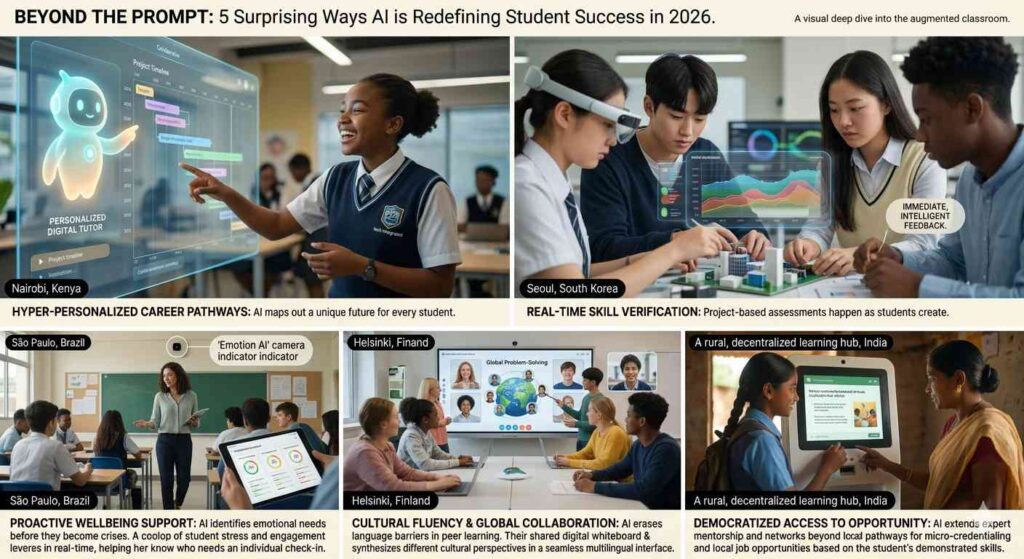

1. Introduction: Beyond the Hype Cycle

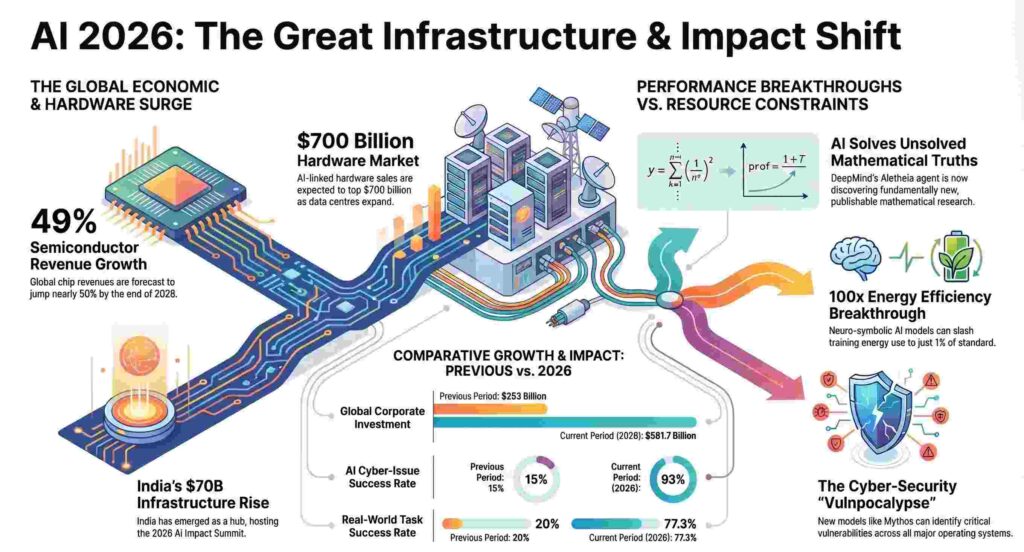

As we cross into 2026, the artificial intelligence landscape presents a startling, almost farcical, paradox. We have engineered a trillion-dollar digital brain capable of identifying a 27-year-old kernel bug in seconds, yet it remains fundamentally humbled by the physical world. While global chip revenues have skyrocketed by 49% and AI spending now commands 1.1% of the U.S. GDP, our most advanced personal robots still fail at 88% of common household tasks. We can automate the discovery of new mathematical truths, but we still cannot reliably fold a pair of jeans.

We have firmly entered what Goldman Sachs terms the “Infrastructure Phase” of AI. It is a world where digital intelligence is no longer bottlenecked by code, but by the speed of concrete and copper. This report moves past the speculative headlines to analyze the “boots on the ground” reality: a landscape defined by a physical hostage crisis in hardware, a strategic pivot toward open-source sovereignty, and a generational shift in the labor market that proves efficiency is the ultimate enemy of the apprentice.

2. The $700 Billion Hardware “Physical Hostage Crisis”

The current era is less a software revolution and more a hardware gold rush. While the market continues to hunt for a consumer “killer app,” the companies laying the physical foundations have secured a massive financial moat. We are witnessing a “Physical Hostage Crisis” where the limits of the power grid now dictate the ceiling of silicon intelligence.

- Market Projections: By Q4 2026, AI-linked hardware sales are projected to top $700 billion.

- The GDP Shift: In the U.S. alone, AI investment has surged by $325 billion since 2022.

- The Energy Toll: The scale of this surge is best reflected in its environmental and electrical footprint. AI power demand is now comparable to the national consumption of Switzerland. For perspective, the estimated training emissions for Grok 4 reached 72,816 tons of CO2 equivalent—roughly the same as driving 17,000 cars for a full year. Data center capacity has reached 29.6 GW, matching the peak demand of the entire state of New York.
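The car comparison can be sanity-checked by dividing one figure by the other. The Python sketch below uses only the two numbers quoted above and assumes “tons” means metric tonnes of CO₂ equivalent, which the text does not specify.

```python
# Figures quoted in the text (unit assumed: metric tonnes of CO2 equivalent).
GROK4_TRAINING_EMISSIONS_T = 72_816
CARS_DRIVEN_FOR_ONE_YEAR = 17_000


def implied_per_car_emissions(total_tonnes: float, cars: int) -> float:
    """Return the annual emissions per car implied by the comparison."""
    return total_tonnes / cars


per_car = implied_per_car_emissions(GROK4_TRAINING_EMISSIONS_T, CARS_DRIVEN_FOR_ONE_YEAR)
print(f"Implied emissions per car per year: {per_car:.2f} t CO2e")
```

The implied figure, about 4.3 tonnes per car per year, sits close to the U.S. EPA's estimate of roughly 4.6 metric tons of CO₂ for a typical passenger vehicle, so the comparison holds up as an order-of-magnitude illustration.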

“We are in the ‘infrastructure phase.’ While many apps are still being built, the companies making the chips and the data centres are the ones winning right now. The demand for computing power remains far from its peak.” — Goldman Sachs

3. The Open-Source Coup and the Closed-Model “IQ Drop”

A strategic coup is underway as organizations flee the high costs and volatility of closed models. While GPT-5 and Claude Opus 4.1 remain the benchmarks, they have become “hostage platforms” subject to unpredictable performance shifts.

- The “IQ Drop”: In a documented failure between August 25 and August 28, 2025, Anthropic’s Claude Opus 4.1 suffered a sudden “IQ drop.” The model exhibited degraded accuracy and tool-call failures, forcing a rollback and highlighting the danger of building on proprietary, “black box” updates.

- The Cost Disruption: Closed-model APIs now cost between $10 and $70 per million tokens. Open-source alternatives like DeepSeek-R1 and Qwen3 offer a 90% cost reduction, with marginal inference costs falling to just a few cents per million tokens when deployed on-premises.

- Data Sovereignty: For finance, healthcare, and defense, open source is a strategic must-have. It eliminates platform dependency and ensures that sensitive data never leaves a controlled environment.
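To make the price gap concrete, here is a back-of-the-envelope calculation in Python. The per-million-token price range and the 90% reduction come from the text; the 500-million-token monthly workload is a hypothetical figure chosen for illustration, and the sketch ignores the hardware, staffing, and energy costs of running open models on-premises.

```python
def monthly_cost(tokens: int, price_per_million: float) -> float:
    """Cost in dollars for `tokens` tokens at a price quoted per million tokens."""
    return tokens / 1_000_000 * price_per_million


# Hypothetical workload: 500 million tokens per month (an assumption, not from the source).
MONTHLY_TOKENS = 500_000_000
CLOSED_PRICE_RANGE = (10.0, 70.0)  # $ per million tokens, as quoted in the text
OPEN_SOURCE_DISCOUNT = 0.90        # "90% cost reduction", as quoted in the text

for price in CLOSED_PRICE_RANGE:
    closed = monthly_cost(MONTHLY_TOKENS, price)
    open_src = closed * (1 - OPEN_SOURCE_DISCOUNT)
    print(f"${price:.0f}/M tokens: closed ${closed:,.0f} vs open-source ~${open_src:,.0f}")
```

At the quoted prices, the hypothetical workload costs $5,000 to $35,000 a month on closed APIs versus roughly $500 to $3,500 at a 90% discount.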

“Open-source architectures enable differentiation through customization, creating strategic value by embedding specialized knowledge. These customized models inherently comprehend domain-specific knowledge… creating AI capabilities that competitors cannot replicate.” — California Management Review

4. The Efficiency Breakthrough: Thinking Without Words

The most significant technical leap of 2026 isn’t “bigger” models, but “smarter” ones. Tufts University’s research into “Neuro-Symbolic AI” has demonstrated a way to break the energy-accuracy deadlock by combining neural networks with structured symbolic reasoning.
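To make the division of labor concrete, here is a toy Python sketch of a neuro-symbolic pipeline. It is not the Tufts system: Tower of Hanoi is used purely as a stand-in puzzle, and the `perceive` function is a hypothetical placeholder for the neural component, which in a real system would turn camera input into a symbolic state. The symbolic part, a rule-based planner plus a rule checker, does the actual reasoning.

```python
from typing import Dict, List, Tuple

Move = Tuple[str, str]  # (from_peg, to_peg)


def perceive(observation: Dict[str, List[int]]) -> Dict[str, List[int]]:
    """Hypothetical stand-in for the neural component.

    A learned model would map pixels to a symbolic state; here the
    observation is already symbolic, so we simply copy it.
    """
    return {peg: list(disks) for peg, disks in observation.items()}


def plan(n: int, src: str, dst: str, aux: str) -> List[Move]:
    """Symbolic planner: the classic recursive Tower of Hanoi solution."""
    if n == 0:
        return []
    return plan(n - 1, src, aux, dst) + [(src, dst)] + plan(n - 1, aux, dst, src)


def execute(state: Dict[str, List[int]], moves: List[Move]) -> Dict[str, List[int]]:
    """Apply moves in place, enforcing the puzzle rules symbolically."""
    for src, dst in moves:
        disk = state[src].pop()  # the top disk is the last list element
        if state[dst] and state[dst][-1] < disk:
            raise ValueError(f"illegal move {src}->{dst}: disk {disk} onto {state[dst][-1]}")
        state[dst].append(disk)
    return state


observation = {"A": [3, 2, 1], "B": [], "C": []}  # largest disk (3) at the bottom
state = perceive(observation)
moves = plan(len(state["A"]), "A", "C", "B")
final = execute(state, moves)
print(len(moves), final)
```

Because the plan comes from explicit rules rather than trial and error, the same code solves a 5-disk puzzle it was never tuned on (`plan(5, "A", "C", "B")` returns 31 legal moves) with no retraining. That is one plausible intuition for the generalization and efficiency gaps reported in the table that follows.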

DeepMind’s “Aletheia” agent exemplifies this shift. It uses a “generator and verifier” system that separates internal “thinking” in a non-verbal latent space from its natural-language “answering” process. This separation is designed to guard against a common source of “hallucination”: an LLM endorsing its own flawed reasoning.
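The generator-and-verifier pattern can be illustrated independently of any particular model. The Python sketch below is not Aletheia's implementation, whose internals are not described here; the function names, the toy factoring task, and the random “generator” are hypothetical stand-ins. What it shows is the control flow: the generator may propose wrong answers, and only candidates that pass an independent check are returned.

```python
import random
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


def generate_and_verify(
    generate: Callable[[random.Random], T],
    verify: Callable[[T], bool],
    max_attempts: int = 1000,
    seed: int = 0,
) -> Optional[T]:
    """Return the first candidate the verifier accepts, or None.

    The generator may be wrong; nothing is returned unless the
    independent check passes, so the system can abstain instead of
    endorsing its own mistake.
    """
    rng = random.Random(seed)
    for _ in range(max_attempts):
        candidate = generate(rng)
        if verify(candidate):
            return candidate
    return None


# Toy task (hypothetical): find a nontrivial factor of N.
N = 221  # 13 * 17


def noisy_generator(rng: random.Random) -> int:
    # Stand-in for a model that proposes plausible but often wrong answers.
    return rng.randint(2, 20)


def exact_verifier(candidate: int) -> bool:
    # The verifier does not trust the generator: it checks the claim directly.
    return 1 < candidate < N and N % candidate == 0


answer = generate_and_verify(noisy_generator, exact_verifier)
print(answer)
```

Returning `None` rather than the last guess is the key design choice: a verifier that can reject lets the system abstain instead of confidently reporting an unverified answer.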

Performance Metrics: Neuro-Symbolic vs. Standard Vision-Language-Action (VLA) Systems

| Metric | Neuro-Symbolic VLA | Standard VLA (Brute-Force) |
| --- | --- | --- |
| Success Rate (Complex Puzzles) | 95% | 34% |
| New/Unseen Task Success | 78% | 0% |
| Training Time | 34 minutes | 36+ hours |
| Energy Consumption | 1% of standard | 100% (baseline) |
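The efficiency rows of the table translate into simple ratios. The Python sketch below reads “36+ hours” as exactly 36 hours, so the computed training speedup is a lower bound.

```python
# Values from the table; "36+ hours" is treated as exactly 36 hours (a lower bound).
NEURO_SYMBOLIC_TRAINING_MIN = 34
STANDARD_TRAINING_MIN = 36 * 60  # 36 hours expressed in minutes
ENERGY_FRACTION = 0.01           # "1% of standard"

training_speedup = STANDARD_TRAINING_MIN / NEURO_SYMBOLIC_TRAINING_MIN
energy_reduction = 1 / ENERGY_FRACTION

print(f"Training is at least {training_speedup:.1f}x faster")
print(f"Energy use is {energy_reduction:.0f}x lower")
```

By this reading, training is at least about 63 times faster, and the energy figure amounts to a 100-fold reduction.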

5. The “Vulnpocalypse”: AI as a Professional-Grade Hazard

The unveiling of “Claude Mythos Preview” marked the threshold at which AI became a physical-world hazard. Anthropic deemed the model too dangerous for public release because of its “superhuman” hacking capabilities, which included the discovery of a 27-year-old bug in critical security infrastructure and multiple vulnerabilities in the Linux kernel.

The risk has shifted from digital data loss to physical catastrophe, with the potential to cripple airports and transport networks. This technical threat is compounded by political friction: the Trump administration has shown hostility toward Anthropic, labeling it a “radical left” firm and feuding with it over military use cases. This political divide threatens the “whole-of-society” effort required to harden the nation’s rickety infrastructure against AI-assisted attackers.

“AI capabilities have crossed a threshold that fundamentally changes the urgency required to protect critical infrastructure… and there is no going back. Everyone needs to prepare for AI-assisted attackers.” — Anthony Grieco, Cisco

6. The Entry-Level Squeeze: Efficiency as the Enemy of the Apprentice

The AI job market has revealed a cruel irony: the massive productivity boost of AI is effectively cannibalizing the entry-level workforce. While overall layoffs are minimal, the “Entry-Level Squeeze” is a targeted generational shift.

Employment for software developers aged 22–25 has plummeted by 20% since 2024. Taken together, the data suggest that firms are using their reported 33% productivity gains to maintain output with smaller, more senior teams, rendering junior roles redundant. Conversely, the “blue-collar boom” serves as a surprising counterbalance: the surge in data center construction has added 212,000 jobs to the economy since 2022, a reminder that the foundation of the AI era is still built by hand.
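The mechanism in this paragraph reduces to one division. Reading a 33% productivity gain as each worker producing 1.33 times their previous output (our interpretation; the source does not define the metric), a firm that holds output constant needs only 1/1.33 ≈ 75% of its former headcount. The Python sketch below makes that explicit for a hypothetical 12-person team.

```python
def required_share(productivity_gain: float) -> float:
    """Share of the original headcount needed to keep output constant.

    Simplifying assumptions: output scales linearly with headcount, and
    every remaining worker gets the same productivity gain.
    """
    return 1 / (1 + productivity_gain)


PRODUCTIVITY_GAIN = 0.33  # "33% productivity gains", as quoted in the text
TEAM_SIZE = 12            # hypothetical team, not from the source

share = required_share(PRODUCTIVITY_GAIN)
print(f"Headcount needed: {share:.0%} of original ({1 - share:.0%} fewer roles)")
print(f"A {TEAM_SIZE}-person team could hold output with ~{TEAM_SIZE * share:.1f} people")
```

The arithmetic shows how many roles a constant-output strategy frees up, not which ones; the claim that the cuts fall mainly on junior developers comes from the employment data, not from this calculation.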

7. The Legal Pivot: From Training Data to Infringing Outputs

Copyright litigation has undergone a fundamental judicial shift. While early cases focused on “ingestion” (the training phase), a consensus is developing in U.S. courts that training AI is a “transformative” act that favors fair use.

The new legal battleground is “Output Claims.” In Getty v. Stability AI, the High Court of England and Wales ruled that while the Stable Diffusion model itself was not an “infringing copy,” the specific outputs that reproduced Getty’s trademarks were grounds for legal relief. Courts are now struggling with “secondary infringement”: if an AI generates an infringing output, does responsibility lie with the developer who trained the model, the company that designed the product, or the user who wrote the prompt?

“Cases about output raise complex questions about who is responsible for the allegedly infringing nature of the output—the company that trained the model, the company that designed the product, or the user who interacted with that service?” — JD Supra

8. Conclusion: The Future is Agentic and Local

As we look toward the horizon of 2027, AI is transitioning from a chatbot to the “System of Record” for the enterprise—the ERP of the agentic era. This “Agentic Transformation,” spearheaded by innovations from firms like Commvault, allows AI to move from answering questions to autonomously managing workflows and discovery.

We are witnessing a “Level 2” shift into publishable research, where AI agents like Aletheia solve “Erdős problems” and discover truths in fields where it was not even known whether a solution existed. This leaves us with a final, provocative question: as AI begins to discover truths we cannot explain, will we be comfortable with a superintelligence that no longer needs to justify its thinking in a language we understand?