It’s Tuesday morning. A brief lands on your desk: ten custom ads are due by Friday, but there is no production crew, no studio, and no travel budget. Not long ago, this scenario would have triggered immediate panic. Today, however, you’ve crossed the Rubicon. You open a high-speed generative engine, input a prompt, and watch a finished, high-fidelity clip materialize before your morning coffee has even cooled.

In this new landscape, speed has become the primary creative currency. The traditional barriers to professional video, expensive hardware and grueling manual post-production, are collapsing. The era of the “manual editor” is giving way to a “Director-Operator” model in which the ability to navigate latent space and iterate quickly is more valuable than the ability to operate a camera.

1. Speed Over Perfection: The Rise of “Iteration Loops”

In 2026, “iteration speed” has surpassed render quality as the critical metric for creative success. The objective is no longer the perfect single render; it’s the rapid-fire sprint from prompt to publish. This shift allows teams to produce ten variations in an afternoon rather than one polished video in a week.

Tools like Leonardo AI and Jitter are leading this “blink-of-an-eye” workflow. Leonardo’s Motion 2.0 generator delivers HD clips in the time it takes to reply to a Slack message. More importantly, it provides technical depth via “Motion Control,” allowing directors to lock camera pans or apply a slow zoom without wrestling with keyframes or diffusion bottlenecks. Jitter complements this by transforming static product shots into animated social assets in seconds, enabling a live, collaborative demo atmosphere during client calls.

“Creative directors want options, not excuses.”

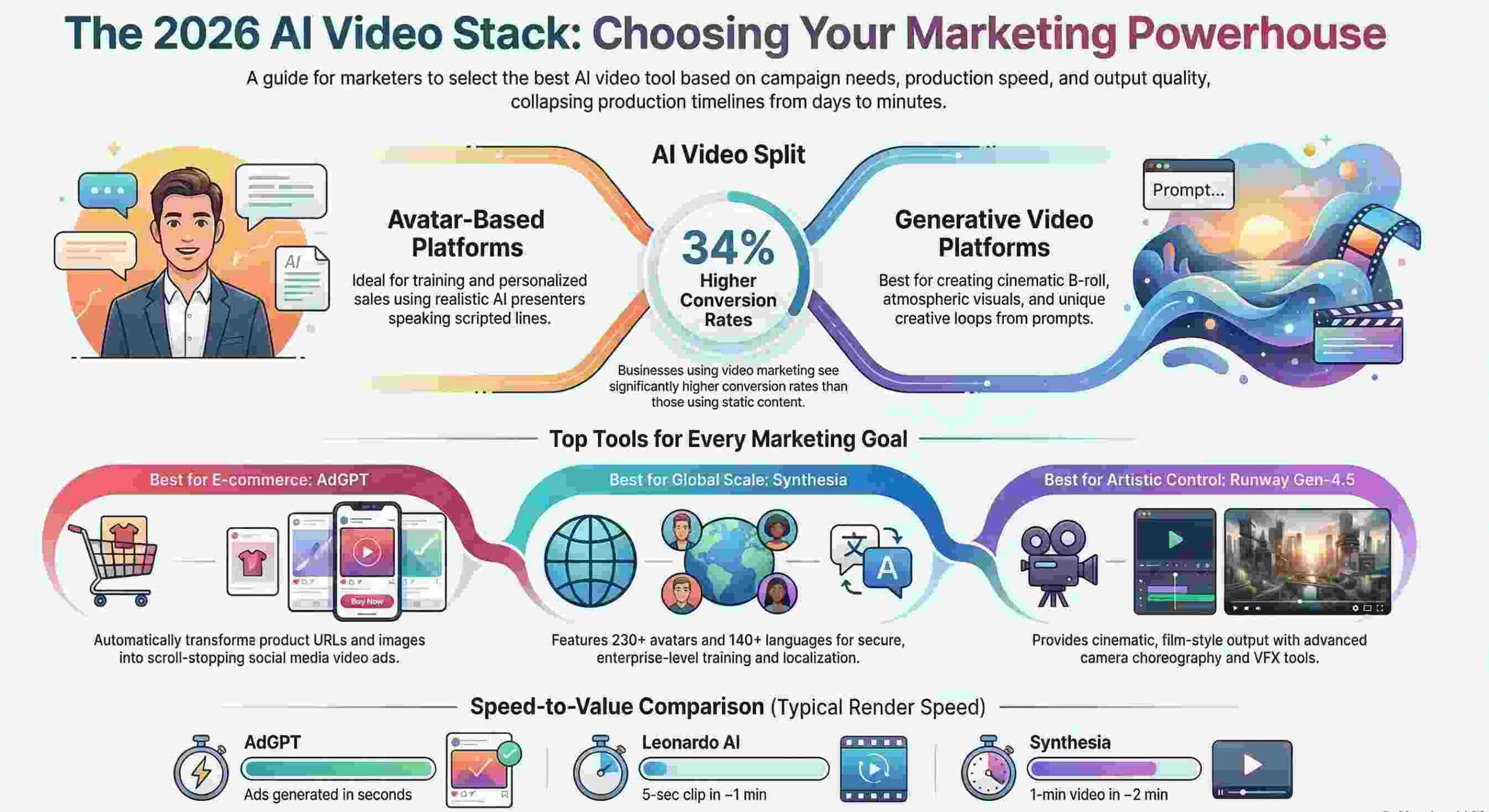

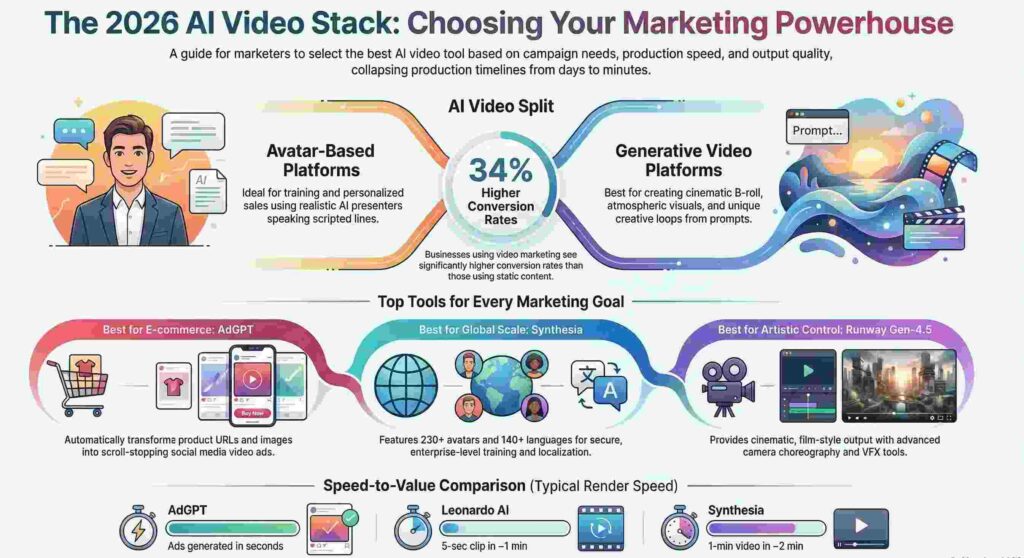

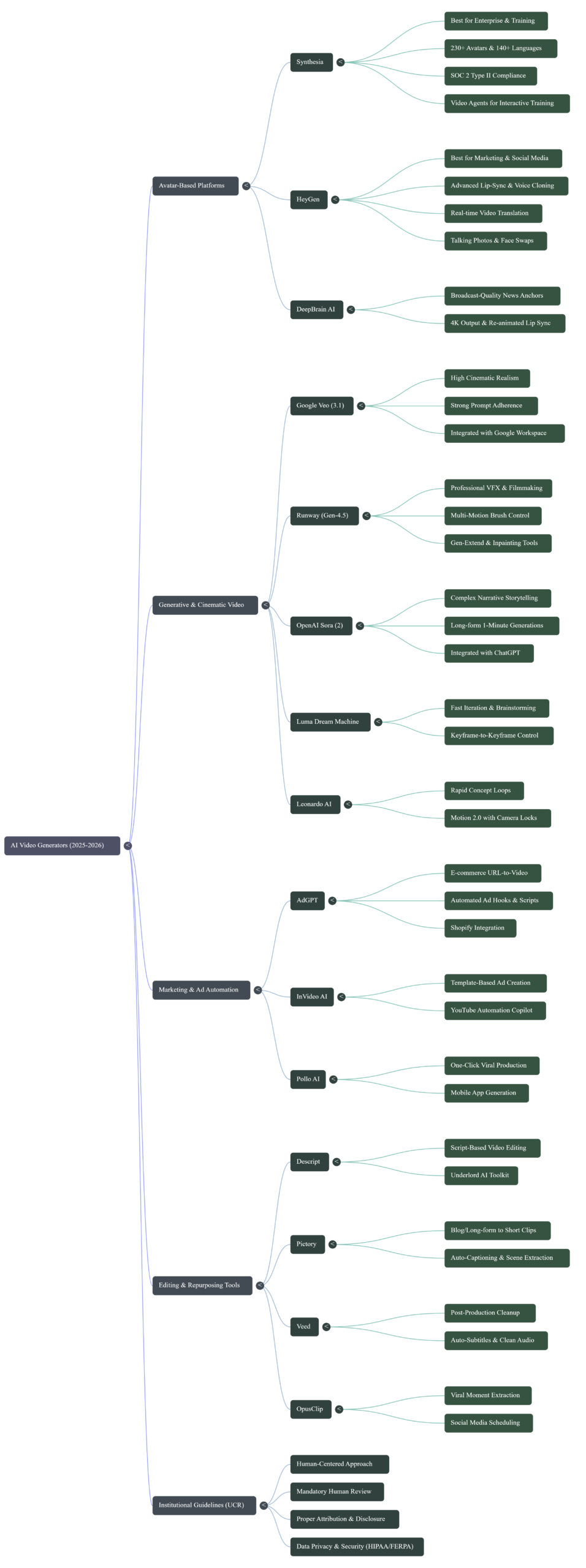

2. The Great Bifurcation: Avatars vs. Generative Cinema

AI video has diverged into two specialized technological paths, each serving a distinct market need. Choosing between them is now a foundational strategic decision.

- Avatar-Based Platforms (Synthesia, HeyGen): These are the enterprise workhorses for “talking-head” content. Synthesia has established itself as the secure standard, trusted by over 90% of the Fortune 100. It is pushing the envelope with “Video Agents” that allow for interactive, responsive training sessions. HeyGen, by contrast, is the creator’s choice for realism. Its “Avatar IV” technology uses motion-capture-based animation to achieve natural eye movements and fluid gestures, avoiding the robotic feel of early models.

- Generative Cinema (Sora, Runway, Veo): These engines focus on atmosphere and B-roll. Tools like OpenAI’s Sora act as an “AI director,” maintaining narrative consistency across multiple shots, while Google Veo 3 and Runway provide the cinematic fidelity required for high-end marketing without the Hollywood price tag.

3. The “No-Edit” Pipeline for E-Commerce

For e-commerce brands, “edit fatigue” is being eliminated by “URL-to-Video” automation. Tools like AdGPT and Pollo AI allow store owners to bypass the assembly line entirely. Pollo AI distinguishes itself as a true all-in-one agent, handling the entire pipeline—from scripting and visuals to voiceovers and pacing—in a single click. It even offers a robust mobile app for creators generating viral content on the move.

The strategic advantage of A/B testing “hooks” at scale cannot be overstated. Since social media engagement relies on stopping the scroll in the first three seconds, these tools allow marketers to instantly generate and test five different opening angles for a single product. This level of granular experimentation was once reserved for massive agencies; now, any Shopify store can deploy it.
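To make the hook-testing idea concrete, here is a minimal sketch of how five opening angles might be compared once impressions come in. It is an illustration under stated assumptions, not how AdGPT or Pollo AI work internally: the `HookResult` type, the hook names, and the numbers are all hypothetical, and the "3-second hold" metric is assumed to come from your ad platform's analytics export.

```python
import math
from dataclasses import dataclass


@dataclass
class HookResult:
    """Hypothetical per-hook stats exported from an ad platform."""
    name: str
    impressions: int
    holds: int  # viewers still watching after 3 seconds (assumed metric)

    @property
    def hold_rate(self) -> float:
        return self.holds / self.impressions


def two_proportion_z(a: HookResult, b: HookResult) -> float:
    """Pooled two-proportion z-score for the difference in hold rate."""
    pooled = (a.holds + b.holds) / (a.impressions + b.impressions)
    se = math.sqrt(pooled * (1 - pooled) * (1 / a.impressions + 1 / b.impressions))
    return (a.hold_rate - b.hold_rate) / se


# Made-up numbers for five opening angles on one product.
results = [
    HookResult("question", 1000, 310),
    HookResult("bold-claim", 1000, 270),
    HookResult("demo", 1000, 350),
    HookResult("testimonial", 1000, 240),
    HookResult("unboxing", 1000, 330),
]

# Rank hooks by hold rate, then check whether the leader is clearly ahead.
ranked = sorted(results, key=lambda r: r.hold_rate, reverse=True)
best, runner_up = ranked[0], ranked[1]
z = two_proportion_z(best, runner_up)
is_clear_winner = abs(z) > 1.96  # roughly 95% confidence, two-sided

print(f"{best.name} vs {runner_up.name}: z = {z:.2f}, clear winner: {is_clear_winner}")
```

With these sample numbers the leading hook is ahead, but not by enough to call a winner, which is the practical point: generating five hooks is cheap, so the discipline shifts to letting each one gather enough impressions before cutting the rest. Comparing many variants at once also raises the odds of a false positive, so a stricter threshold may be sensible.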

4. The Technical Debt: Glitches in the Uncanny Valley

Despite the visionary hype, 2026 hands-on tests from Manus reveal that human oversight remains non-negotiable. High-end models still suffer from technical artifacts that can break a brand’s immersion.

The Manus evaluations highlighted specific “uncanny valley” hurdles: Runway Gen-4.5 can still produce unsettling “glitching eyes” during character close-ups, while Google Veo 3 demonstrated inconsistencies during camera transitions, such as “disappearing cherry blossoms” that vanish when the framing shifts. These physics failures and robotic movements mean that while the AI does the heavy lifting, a human operator must still act as the final quality shield to fix illogical scene transitions.

5. Localization is Now a “Single-Click” Feature

The traditional localization bottleneck (hiring global voice talent and managing expensive reshoots) has been dismantled. By combining DeepBrain AI’s re-animated lip-sync technology with HeyGen’s real-time translation, one master video can reach over 140 languages almost instantly.

DeepBrain AI re-shapes a presenter’s mouth frame-by-frame to match new audio, ensuring that a speaker in London looks perfectly natural speaking Spanish or Mandarin.

“Agencies serving global clients can turn one master video into five localized versions before lunch, with no reshoots, voice-over fees, or blown deadlines.”

6. Repurposing as a Content Superpower

The most efficient brands treat every long-form recording as a library of “golden nuggets.” Tools like Pictory and OpusClip have turned content recycling into a science. These platforms do not just “clip” video; they analyze the footage to identify engagement peaks and viral-ready segments.

This allows brands to “squeeze more value” out of a single 45-minute webinar, automatically cropping and captioning the highlights for TikTok, Reels, and Shorts. It turns less than an hour of raw recording into an entire month of social media presence.
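The idea of finding "engagement peaks" can be sketched in a few lines. This is a simplified illustration, not the method Pictory or OpusClip actually use (which is not described here): it assumes you already have a hypothetical per-second engagement score for the recording, for example from retention analytics, and it greedily picks the highest-scoring non-overlapping windows of a fixed clip length.

```python
def top_segments(scores, window, k):
    """Return up to k non-overlapping (start, end) windows, in timeline order,
    chosen greedily by highest average score. `end` is exclusive."""
    if window <= 0 or window > len(scores):
        return []

    # Prefix sums let us score every window in O(1).
    prefix = [0.0]
    for s in scores:
        prefix.append(prefix[-1] + s)

    candidates = [
        ((prefix[i + window] - prefix[i]) / window, i)
        for i in range(len(scores) - window + 1)
    ]
    # Best average first; ties go to the earlier window.
    candidates.sort(key=lambda c: (-c[0], c[1]))

    chosen = []
    for _avg, start in candidates:
        end = start + window
        # Keep the window only if it does not overlap one already chosen.
        if all(end <= s or start >= e for s, e in chosen):
            chosen.append((start, end))
        if len(chosen) == k:
            break
    return sorted(chosen)


# Hypothetical engagement scores, one per second of footage.
scores = [1, 1, 5, 6, 5, 1, 1, 1, 4, 4, 4, 1]
clips = top_segments(scores, window=3, k=2)
print(clips)
```

In practice the window would be tens of seconds rather than three, and clip boundaries would be snapped to sentence breaks in the transcript so that highlights do not start mid-word.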

7. Commercially Safe AI: The Legal Shield

As generative media becomes ubiquitous, the risk of copyright infringement has become a primary enterprise concern. This has fueled the trend of “commercially safe” AI, led by Adobe Firefly.

Unlike models trained on random web-scraped data, Firefly is trained on licensed Adobe Stock and public domain material. For agencies, the core value proposition here is IP indemnification. This legal shield protects the agency and the client from the nightmare of copyright claims, making it a standard requirement for professional brand environments in 2026.

Conclusion: The New Era of the Director-Operator

The shift is definitive: the creator’s role has migrated from “manual labor” to “creative orchestration.” Industry data forecasts the AI video market will grow from $534 million to over $2.5 billion by 2032. In this world, the technical difficulty of production has dropped sharply, even if human review remains essential, and the value of a high-quality idea has never been higher.

In a world where everyone can generate a masterpiece in the time it takes to drink a coffee, what will be the true value of your next creative idea?